Beyond the Demo: What to Look for in an Enterprise AI Platform

Fit Over Features

Polished demos and long lists of capabilities can be compelling, but they only show what an AI platform can do. The real challenge isn’t proving AI’s value; it’s operationalizing it at scale.

Enterprise AI success depends on asking the right questions. You need to understand how a given AI platform will perform in your organization: in production, across teams, and under real-world conditions. A feature checklist is useful, but knowing which strategic questions to ask vendors is essential.

Start with the Basics

- Does it integrate with your existing systems and data infrastructure?

- How does it handle governance, security, and compliance for your industry?

- What deployment models and data residency options are available?

- Can it scale under real workloads (e.g., thousands or millions of users or transactions)?

- How are AI outputs grounded in real enterprise data and business context?

- What is the total cost of ownership at scale, not just initial licensing costs?

What to Look for in an Enterprise AI Platform

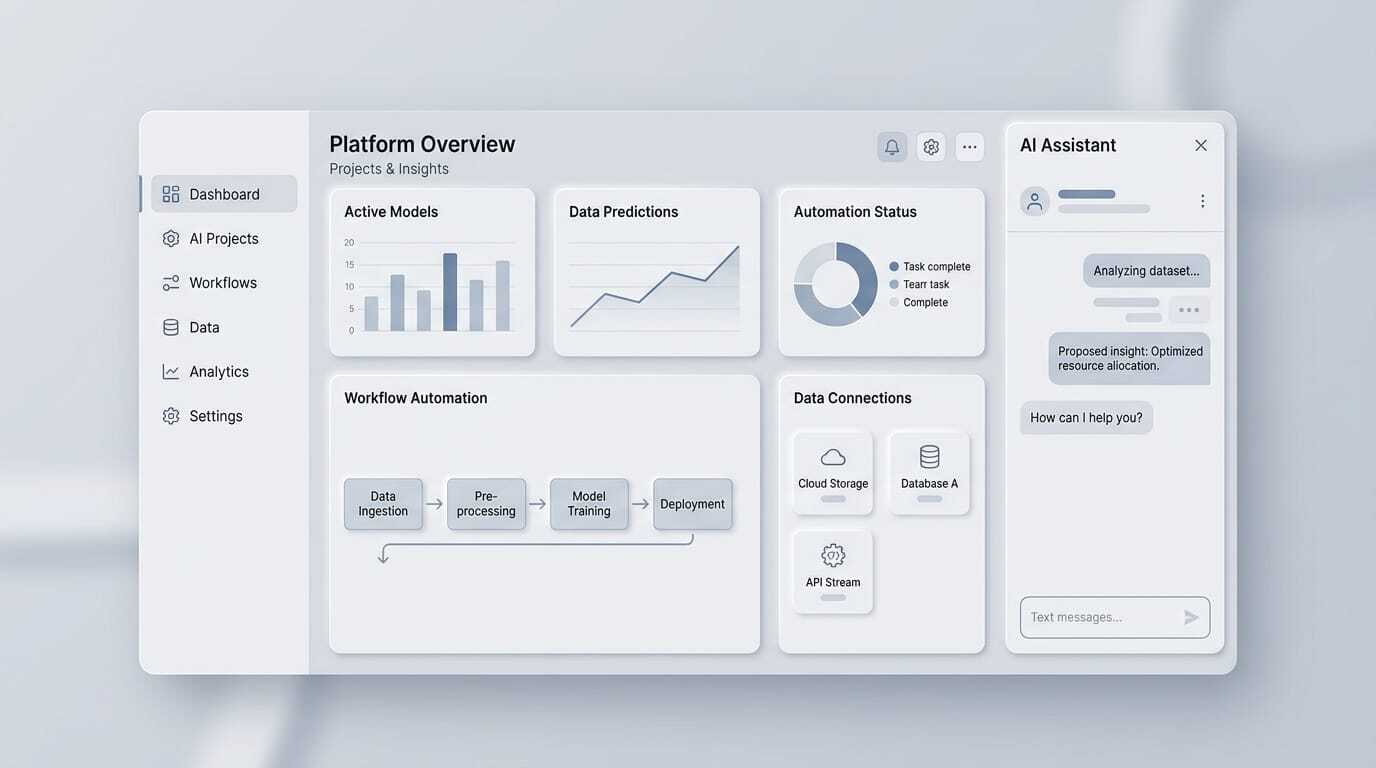

1. End-to-end Model Lifecycle Management

Enterprise AI is not a one-time setup; it’s an ongoing process in which models must be trained, versioned, deployed, monitored, and retrained as data and conditions evolve. That makes lifecycle management critical.

Look for a platform that makes it easy to iterate on and continuously refine models, move successful prototypes into production, and measure performance with confidence. You also need visibility into usage, performance, and outcomes over time, through monitoring, analytics, and feedback loops, to improve accuracy, control costs, and adapt as business needs change.

Key capabilities to look for include model versioning, experiment tracking, rollback, built-in evaluation frameworks, A/B testing, observability (into prompts, tool calls, and outputs), drift detection, and retraining workflows.
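Drift detection is the least self-explanatory item on that list, so here is a minimal sketch of one common approach, the population stability index (PSI), which compares how a model input or score is distributed in production against a baseline window. The bin edges, the sample data, and the 0.2 alert threshold are illustrative assumptions (0.2 is a widely used rule of thumb, not a standard), and a platform’s built-in drift monitoring may rely on different statistics entirely.

```python
import bisect
import math
from typing import Sequence


def bin_proportions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Fraction of values in each bin; `edges` must be sorted ascending.

    bisect_right puts values below edges[0] in bin 0 and values >= edges[-1]
    in the last bin, so len(edges) + 1 bins cover every value.
    """
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population stability index: sum of (a - e) * ln(a / e) over bins."""
    total = 0.0
    for e, a in zip(bin_proportions(baseline, edges), bin_proportions(current, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


def has_drifted(score: float, threshold: float = 0.2) -> bool:
    # 0.2 is a common rule-of-thumb cutoff (assumption); tune per use case.
    return score >= threshold


# Usage: a production window shifted upward triggers the alert.
baseline = list(range(100))      # e.g., last quarter's values (made-up data)
current = list(range(50, 150))   # this week's values, shifted upward
edges = [25, 50, 75]
print(has_drifted(psi(baseline, baseline, edges)))  # False
print(has_drifted(psi(baseline, current, edges)))   # True
```

In a real pipeline, a drift alert like this would typically feed the retraining workflow rather than trigger retraining automatically, so a person can confirm the shift is real.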

2. Scalability & Serving

Many AI solutions perform well in small pilots but break down at scale. Serving AI models reliably in production introduces challenges around latency, uptime, and traffic management. You need a platform with built-in scalability, one that can handle real workloads and grow alongside your business; otherwise, you risk having to engineer scalability yourself later, adding unnecessary complexity and cost.

Evaluate whether the platform can:

- Handle high concurrency and peak traffic, such as thousands of simultaneous requests or usage across multiple departments, without degradation

- Automate load balancing, routing, and infrastructure scaling

- Support flexible deployments (cloud, private VPC, on-prem) without limiting functionality

- Meet enterprise standards for uptime, reliability, and resiliency

Containerized and serverless infrastructures generally scale more easily, which makes them better suited to dynamic, high-demand environments.

Scalability and serving infrastructure are also primary drivers of cost. Look for granular cost visibility, budget controls, usage alerts, and optimization techniques like caching, intelligent routing, and batching.
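Caching and budget controls can be illustrated with a small wrapper around a model call. In this sketch, `call_model` is a hypothetical stand-in for whatever provider SDK you use, assumed to return the response text and the dollar cost of the call; the exact-match cache, the hard budget stop, and the 80% alert threshold are illustrative policy choices, not features of any particular platform.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BudgetedClient:
    """Wraps a model call with an exact-match cache, spend tracking, and a budget.

    call_model is a hypothetical stand-in for a provider SDK call that returns
    (response_text, cost_in_dollars).
    """
    call_model: Callable[[str], tuple[str, float]]
    budget: float
    alert_ratio: float = 0.8  # assumed policy: alert once 80% of budget is spent
    spent: float = 0.0
    cache_hits: int = 0
    alerts: list[str] = field(default_factory=list)
    cache: dict[str, str] = field(default_factory=dict)

    def complete(self, prompt: str) -> str:
        if prompt in self.cache:
            self.cache_hits += 1  # served from cache: no model call, no cost
            return self.cache[prompt]
        # Simple pre-call check: blocks new calls once the budget is used up,
        # but a single call can still overshoot the budget by its own cost.
        if self.spent >= self.budget:
            raise RuntimeError("budget exhausted; request blocked")
        text, cost = self.call_model(prompt)
        threshold = self.alert_ratio * self.budget
        if self.spent < threshold <= self.spent + cost:
            self.alerts.append(f"spend crossed {threshold:.2f} of {self.budget:.2f}")
        self.spent += cost
        self.cache[prompt] = text
        return text


# Usage with a fake model that charges $0.50 per call.
client = BudgetedClient(call_model=lambda p: (p.upper(), 0.50), budget=1.00)
client.complete("status of order 981")
client.complete("status of order 981")  # cache hit, free
client.complete("refund policy")        # pushes spend past the 80% alert line
print(client.spent, client.cache_hits, len(client.alerts))  # 1.0 1 1
```

Exact-match caching only pays off when identical prompts recur; semantic caching, which reuses answers for similar prompts, can save more but risks returning an answer that doesn’t quite fit the new question.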

3. Integration with Enterprise (Data) Systems

AI is only as good as the data it can access, so you need a platform that integrates seamlessly with the systems and internal knowledge repositories you already use. Look for native connectors to core systems (CRM, ERP, data warehouses, streaming pipelines, etc.); robust APIs, SDKs, and webhooks for extensibility and custom integrations; and support for retrieval-augmented generation (RAG) and vector databases.

Note that integration depth and ease of setup are often inversely related. Shallow integration (e.g., manual data uploads, limited connectors) can accelerate deployment but risks creating siloed systems that lack context and quickly lose relevance. Deep integration embeds AI into workflows and grounds outputs in real enterprise data and context, but it also introduces greater complexity. The goal is to strike a balance between speed and depth based on your organization’s priorities and maturity.
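To show what grounding outputs in enterprise data means mechanically, here is a deliberately simplified retrieval-augmented generation step: score documents against a question, keep the best matches, and build a prompt that carries their source IDs so the answer can be attributed. It uses bag-of-words cosine similarity in place of the learned embeddings and vector database a real RAG pipeline would use, and the document IDs and contents are made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """IDs of the k most similar documents, dropping ones with no overlap."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(text)), doc_id) for doc_id, text in docs.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Grounded prompt: retrieved passages tagged with source IDs for attribution."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return ("Answer using only the sources below and cite their IDs.\n"
            f"{context}\n\nQuestion: {query}")


docs = {  # hypothetical records pulled from a CRM, an HR wiki, and an ERP
    "crm-42": "Customer Acme renewed its enterprise contract in March",
    "hr-7": "Vacation policy allows 20 days per year",
    "erp-3": "Acme purchase order 981 shipped from the Ohio warehouse",
}
print(retrieve("When did Acme renew its contract?", docs))  # ['crm-42', 'erp-3']
```

The source IDs travel with the retrieved text into the prompt, which is what makes source attribution possible later; the quality of the answer still depends on how well the connectors keep those records fresh.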

4. Governance, Security, and Control

As AI systems increasingly influence critical decisions and workflows, organizations must prioritize governance, security, and compliance. Look for platforms that provide fine-grained role-based access control (RBAC) and identity management (SSO/SAML), audit logs for full traceability, data residency and private deployment options, and compliance with standards like SOC 2, ISO 27001, GDPR, and HIPAA.

Maintaining oversight as adoption grows is equally important. For generative AI in particular, evaluate support for retrieval pipelines, data freshness controls, retention and deletion policies, and source attribution to ensure outputs remain accurate, transparent, and aligned with governance standards.
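Role-based access control and audit logging are easiest to evaluate when you know what they should do together: every request is checked against the caller’s role, and every decision, allowed or denied, is recorded. The sketch below assumes a hypothetical three-role scheme and permission names such as `model:deploy`; real platforms define their own roles and typically pull identities from an SSO/SAML provider rather than a hard-coded user table.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping; real platforms define their own.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "viewer": {"model:query"},
    "developer": {"model:query", "model:deploy"},
    "admin": {"model:query", "model:deploy", "model:delete"},
}


@dataclass
class AccessController:
    user_roles: dict[str, str]  # user -> role; in practice sourced from SSO/SAML
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, user: str, action: str) -> bool:
        role = self.user_roles.get(user)
        # Unknown users or roles get an empty permission set: deny by default.
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,  # denials are logged too, for full traceability
        })
        return allowed


acl = AccessController(user_roles={"dana": "developer", "vic": "viewer"})
print(acl.authorize("dana", "model:deploy"))    # True
print(acl.authorize("vic", "model:deploy"))     # False
print(acl.authorize("mallory", "model:query"))  # False (unknown user)
print(len(acl.audit_log))                       # 3
```

Denying by default and logging denials as well as approvals are the two properties worth probing in a vendor demo: ask what happens to a user with no role, and whether rejected requests show up in the audit trail.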

5. Collaboration Across Teams

Enterprise AI is inherently cross-functional. Data scientists, software engineers, IT, security, and business teams must coordinate, which requires a platform that supports collaboration. Fragmented AI initiatives struggle to scale, so look for platforms that support shared workspaces, version control, role-based access, structured approval and publishing workflows, and human-in-the-loop systems.

Depending on available talent, you may want to prioritize platforms with user-friendly interfaces. No-code/low-code tools, templates, and sample agents can accelerate adoption, while SDKs and comprehensive documentation are important for more technical users.

It’s also worth evaluating the support ecosystem, including the onboarding process, availability of in-product guidance and training resources, and how quickly support teams can respond to issues.

6. Flexibility & Future-Proofing

Enterprise AI is evolving rapidly. Platforms must support experimentation today while remaining flexible enough to meet future needs, allowing teams to start small, test quickly, and scale as initiatives mature.

Key capabilities to look for include multi-model support (including bring-your-own-model), prompt versioning and model routing, structured outputs, tool use and agent orchestration, and guardrails for safety and consistency, especially as AI systems become more embedded in critical workflows.
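Model routing, structured outputs, and guardrails often work together: a request goes to a preferred model, the response is validated against an expected structure, and if either the call or the validation fails, the request falls back to the next model. In this sketch the models are plain Python functions standing in for hypothetical provider clients, and the validation is a hand-rolled key-and-type check rather than a full JSON Schema validator.

```python
import json
from typing import Callable

Model = Callable[[str], str]  # hypothetical: prompt in, raw text out


def validate(raw: str, required: dict[str, type]) -> dict:
    """Guardrail: output must be a JSON object with the required typed fields."""
    data = json.loads(raw)  # malformed JSON raises json.JSONDecodeError (a ValueError)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in required.items():
        if not isinstance(data.get(key), expected):
            raise ValueError(f"field {key!r} missing or not {expected.__name__}")
    return data


def route(prompt: str, models: list[tuple[str, Model]],
          required: dict[str, type]) -> tuple[str, dict]:
    """Try models in priority order, falling back on errors or invalid output."""
    failures = []
    for name, model in models:
        try:
            return name, validate(model(prompt), required)
        except Exception as exc:  # provider error or guardrail rejection
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(failures))


def small_model(prompt: str) -> str:
    return "Sure! The label is invoice."  # free text, fails the JSON guardrail


def large_model(prompt: str) -> str:
    return '{"label": "invoice", "confidence": 0.93}'


schema = {"label": str, "confidence": float}
print(route("Classify this document", [("small", small_model), ("large", large_model)], schema))
# ('large', {'label': 'invoice', 'confidence': 0.93})
```

Ordering models cheapest-first keeps most traffic on the less expensive model and pays for the larger one only when the smaller one fails; collecting the failure reasons gives observability tooling something concrete to report.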

As agentic AI emerges as the next frontier, platforms that can execute multi-step workflows (not just generate responses) will deliver the most long-term value.

The Bottom Line

The right platform isn’t the one with the most features; it’s the one that works in production, at scale, and over time. Look beyond demos to understand how systems integrate, how models perform under real conditions, and how teams will manage them day to day.

AI also doesn’t operate in isolation. In practice, AI often connects with XR for visualization and training, digital twins for simulation and prediction, and IoT systems for real-time data. It’s part of a broader transformation strategy, which makes learning from real deployments critical.

The 2026 Augmented Enterprise Summit will bring together technology leaders from Walmart, Johnson & Johnson, RTX, Coca-Cola, and dozens more to share real-world experiences of deploying AI, XR, and digital twins at scale.

Featured image generated with AI.